In May this year, I wrote about how AI is changing documentation, a few months into the proliferation of LLMs. At the time, I argued that LLMs had not yet made much of a dent in the documentation landscape, for a couple of reasons:

- AIs making things up is a big issue and especially problematic for documentation

- Without knowing how your product works, LLMs can’t write meaningful, quality documentation by themselves

I argued that AI would in the short term be most helpful in documentation as a writing assistant, and that we are not yet replacing technical writers with AI.

But it’s now September 2023. Have things changed? Let’s do a quick review.

AI chatbots for documentation

The first obvious use case for LLMs was being able to ask an AI chatbot questions about your documentation. This could be extremely useful. Readers famously rarely read the documentation in detail, so maybe an LLM could help surface relevant information. Or perhaps an AI could even generate customized learning paths for the reader on the fly, based on the reader’s experience.

We’ve seen lots of companies add LLMs to their docs this year, Supabase being an early example from the beginning of the year with their Clippy helper.

Has anything changed? Are the responses better?

Not particularly.

Anecdotally, AI documentation chatbots have the same issues as at the beginning of the year: failing to find answers that are not explicitly stated in the corpus, misunderstanding the docs, or making things up.

In the previous post I went through some of the technical details about why these issues exist, and there haven’t been any AI research breakthroughs that have changed things.

When AI assistants backfire

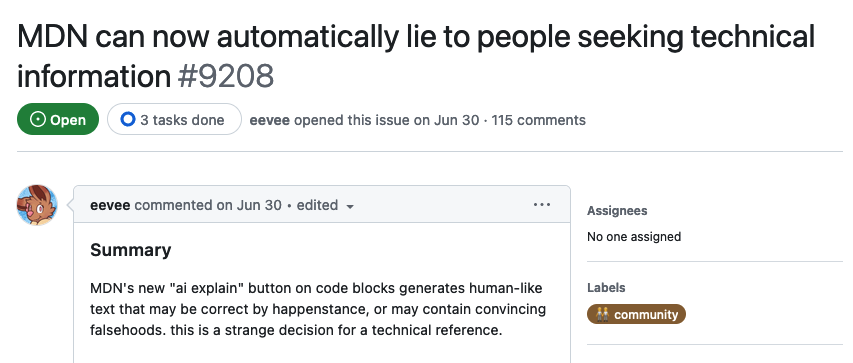

We now have a very public example of AI documentation chatbots failing in the wild from Mozilla and their MDN Web Docs, which describe open web technologies.

They introduced an AI Help feature in late June that lets you ask questions about the reference documentation.

GitHub user eevee opened an issue just a few days later showing how the new AI helper is producing incorrect answers. There are a number of examples listed in the long list of comments.

What is interesting about this example is that MDN was likely part of the training corpus that GPT-4 was trained on. Still, it was not able to produce correct results enough of the time. GPT-4 knows HTML, CSS, and JavaScript, but still ran into issues.

Your product was likely not part of the training set, so don’t expect better results than this.

Non-expert in the loop

What makes documentation chatbots such a tough case for LLMs is that the user is usually, by definition, a non-expert. They do not necessarily know when the LLM is talking nonsense.

Imagine you had a support agent for your company that just made things up 5-10% of the time. That wouldn’t be acceptable.

Let’s look at an example of a similar use case that does work: LLMs helping lawyers and other knowledge workers understand complex contracts. You can load up large documents into LLMs and ask questions about the terms of a sales agreement, for example.

One such vendor is Box, which has introduced these kinds of AI features to their suite of products.

Sounds very similar to documentation search, but with an important difference: the user is an expert. They can recognise when the LLM is incorrect, while still enjoying a productivity benefit from the tool most of the time.

Surely it’s not all bad?

One issue I pointed out in May was that AI search was sloooooow. Especially the larger GPT-4 based models took a long time to generate tokens.

Well, that has (predictably) changed!

GPT-4 is significantly faster than it was just a few months ago, and this is a great usability improvement. I tried looking for data on this but OpenAI does not seem to publish speed benchmarks (at least that are easy to find). So you’ll have to take my word for it: GPT-4 is (anecdotally) much faster.

But is this enough to make AI-powered documentation search 10x better than traditional search? Given the other issues we’ve mentioned, my verdict is still a “no”. We haven’t seen foundational changes to LLMs that would solve the issues I discussed in May.

AI for writing docs

The promise of AI writing all your documentation for you has not materialized.

This is especially true for larger product documentation and tutorials. While it is possible on a function or module level for source code, writing accurate, helpful, and consistent documentation for a complex technical product is still outside the capabilities of LLMs.

But assisting professional writers in doing exactly this is something that LLMs are well suited for!

This is where the recent advancements in AI are much more useful. If you are a technical writer, developer advocate, or product owner writing documentation, LLMs can be fantastic assistants. They help you rephrase content, produce outlines, and keep a consistent voice across your docs. They’re a great cure for blank page syndrome.

Depending on what tools you are currently using, you may have an AI integration already in your writing environment, but personally I tend to spend time in the ChatGPT interface when writing all sorts of content - not just documentation.

There will likely be more innovation in this space aimed at technical writing specifically in the future.

Are your docs AI-ready?

OpenAI announced ChatGPT Plugins in March 2023, which let ChatGPT fetch information from external live data sources.

The question I posed in May was, are we going to need to ensure our docs are easily accessible for LLMs, and if so, what does that mean in practice?

This was speculative then, and still remains so today. There certainly has not been a proliferation of autonomous AI agents collecting information or performing tasks for their users, and which would need to query your docs in order to complete said tasks.

If this does come to pass, API references and OpenAPI may end up playing an important role. (Yes, OpenAPI vs OpenAI is confusing…)

Hear me out: a standard “documentation” endpoint for every API that returns clear and concise API documentation for the purpose of feeding into an LLM to generate API queries.

— Yohei (@yoheinakajima) September 3, 2023

I believe this tweet (or is it an “x” now?) misses the fact that we already have such a tool: OpenAPI.

OpenAPI is a great way to describe how your API works to an LLM. In fact, OpenAI plugin manifest files require an OpenAPI spec that ChatGPT then uses to call the service behind the plugin. It kind of sounds like magic: you just provide a JSON blob describing the endpoint and the AI figures out the rest.

Here’s a key point from the docs:

The model will see the OpenAPI description fields, which can be used to provide a natural language description for the different fields.

It may be important for your API references to include well written usage instructions, so that LLMs can use them properly. For example, what order do we invoke the operations in?
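To make this concrete, here is a minimal sketch of what a model might "see" from an OpenAPI document. Everything in it is an assumption for illustration: the orders API, its paths, the `operationId` values, and the helper functions are all hypothetical, and this is not how ChatGPT plugins process a spec internally. It only shows that the `description` fields are where usage instructions, such as the order of operations, can live.

```python
# Hypothetical OpenAPI 3 document for an imaginary orders API.
# Every path, operationId, and description below is invented for illustration.
OPENAPI_SPEC = {
    "openapi": "3.0.3",
    "info": {
        "title": "Example Orders API",
        "version": "1.0.0",
        "description": "Create a cart first, then submit it as an order.",
    },
    "paths": {
        "/carts": {
            "post": {
                "operationId": "createCart",
                "description": "Step 1: create an empty cart. Returns a cart id.",
            }
        },
        "/orders": {
            "post": {
                "operationId": "submitOrder",
                "description": "Step 2: submit a cart as an order.",
                "parameters": [
                    {
                        "name": "cartId",
                        "in": "query",
                        "required": True,
                        "description": "Id returned by createCart.",
                        "schema": {"type": "string"},
                    }
                ],
            }
        },
    },
}

# HTTP methods that may appear as operation keys on an OpenAPI path item.
HTTP_METHODS = ("get", "put", "post", "delete", "options", "head", "patch", "trace")


def describe_operations(spec):
    """Collect the natural language descriptions into one plain-text summary."""
    lines = [spec["info"].get("description", "")]
    for path, path_item in spec.get("paths", {}).items():
        for method in HTTP_METHODS:
            operation = path_item.get(method)
            if operation is None:
                continue
            name = operation.get("operationId", "unnamed")
            text = operation.get("description", "NO DESCRIPTION")
            lines.append(f"{method.upper()} {path} ({name}): {text}")
            for param in operation.get("parameters", []):
                detail = param.get("description", "NO DESCRIPTION")
                lines.append(f"  - {param['name']}: {detail}")
    return "\n".join(lines)


def operations_missing_descriptions(spec):
    """List operations that give a model no written guidance at all."""
    missing = []
    for path, path_item in spec.get("paths", {}).items():
        for method in HTTP_METHODS:
            operation = path_item.get(method)
            if operation is not None and not operation.get("description"):
                missing.append(f"{method.upper()} {path}")
    return missing


if __name__ == "__main__":
    print(describe_operations(OPENAPI_SPEC))
    print("Missing descriptions:", operations_missing_descriptions(OPENAPI_SPEC))
```

A check like `operations_missing_descriptions` could run as part of a docs build to flag endpoints where an LLM (or a human) would have nothing but the path name to go on.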

Conclusions

I think my overall opinions have not changed since my first post in May:

- AI docs chatbots are not particularly helpful and are occasionally borderline problematic

- AIs are great tools for helping writers produce content

What I hadn’t considered before is the possible importance of OpenAPI references for describing APIs to LLMs. We will have to see how this develops.

GPT-5 is expected to be released at the end of this year, bringing with it at least another order of magnitude in computational power to the bleeding edge of LLM development. We will have to see what new capabilities it brings, or if we will see signs of hitting the limits of current transformer-based architectures.

It still remains to be seen how AI is going to change knowledge work in the coming years.
